Preface Fifth Edition

The fifth edition of Computer Systems offers a clear, detailed, step-by-step exposition of the central ideas in computer organization, assembly language, and computer architecture. The book is based in large part on a virtual computer, Pep/9, which is designed to teach the basic concepts of the classic von Neumann machine. The strength of this approach is that the central concepts of computer science are taught without getting entangled in the many irrelevant details that often accompany such courses. This approach also provides a foundation that encourages students to think about the underlying themes of computer science. Breadth is achieved by emphasizing computer science topics that are related to, but not usually included in, the treatment of hardware and its associated software.

Summary of Contents

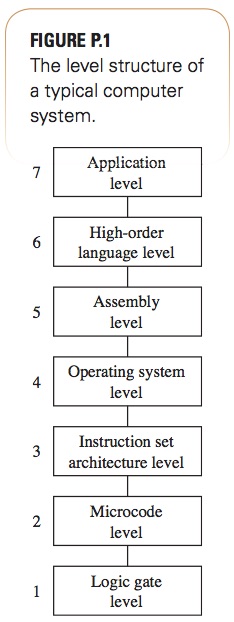

Computers operate at several levels of abstraction; programming at a high level of abstraction is only part of the story. This book presents a unified concept of computer systems based on the level structure of FIGURE P.1.

The book is divided into seven parts, corresponding to the seven levels of FIGURE P.1:

- Level App7 — Applications

- Level HOL6 — High-order languages

- Level ISA3 — Instruction set architecture

- Level Asmb5 — Assembly

- Level OS4 — Operating system

- Level LG1 — Logic gate

- Level Mc2 — Microcode

The text generally presents the levels top-down, from the highest to the lowest. Level ISA3 is discussed before Level Asmb5, and Level LG1 is discussed before Level Mc2 for pedagogical reasons. In these two instances, it is more natural to revert temporarily to a bottom-up approach so that the building blocks of the lower level will be in hand for construction of the higher level.

Level App7

Level App7 is a single chapter on application programs. It presents the idea of levels of abstraction and binary information and establishes the framework for the remainder of the book. A few concepts of relational databases are presented as an example of a typical computer application.

Level HOL6

Level HOL6 consists of one chapter, which reviews the C programming language. The chapter assumes that the student has experience in some imperative language, such as Java or Python—not necessarily C. The instructor can readily translate the C examples to other common Level HOL6 languages if necessary.

This chapter emphasizes the C memory model, including global versus local variables, functions with parameters, and dynamically allocated variables. The topic of recursion is treated because it depends on the mechanism of memory allocation on the run-time stack. The memory allocation process for function calls is explained in some detail, as this mechanism is revisited at a lower level of abstraction later in the book.
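As a rough illustration of the three kinds of storage named above, the following sketch uses a global variable, a recursive function whose parameter lives on the run-time stack, and a heap allocation. The names `callCount`, `factorial`, and `makeCell` are hypothetical and are not taken from the text.

```c
#include <assert.h>
#include <stdlib.h>

int callCount = 0;              /* global: allocated at a fixed location */

/* Recursive factorial: each call gets its own copy of n on the run-time stack. */
int factorial(int n) {
    assert(n >= 0);
    callCount++;                /* counts how many calls (stack frames) occurred */
    if (n <= 1) {
        return 1;
    }
    return n * factorial(n - 1);
}

/* Returns a heap-allocated cell holding value; the caller must free() it. */
int *makeCell(int value) {
    int *cell = malloc(sizeof *cell);   /* dynamic: allocated from the heap */
    if (cell != NULL) {
        *cell = value;
    }
    return cell;
}
```

Calling `factorial(5)` creates five nested frames, one per call, before the returns unwind them; the cell returned by `makeCell` outlives the call that created it until it is freed.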

Level ISA3

Level ISA3 is the instruction set architecture level. Its two chapters describe Pep/9, a virtual computer designed to illustrate computer concepts. Pep/9 is a small complex instruction set computer (CISC) and a von Neumann machine. The central processing unit (CPU) contains an accumulator, an index register, a program counter, a stack pointer, and an instruction register. It has eight addressing modes: immediate, direct, indirect, stack-relative, stack-relative deferred, indexed, stack-indexed, and stack-deferred indexed. The Pep/9 operating system, in simulated read-only memory (ROM), can load and execute programs in hexadecimal format from students’ text files. Students run short programs on the Pep/9 simulator and learn that executing a store instruction to ROM does not change the memory value.

Students learn the fundamentals of information representation and computer organization at the bit level. Because a central theme of this book is the relationship of the levels to one another, the Pep/9 chapters show the relationship between the ASCII representation (Level ISA3) and C variables of type char (Level HOL6). They also show the relationship between two’s complement representation (Level ISA3) and C variables of type int (Level HOL6).
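The two relationships mentioned above can be sketched in C. This is an illustrative example only: the function names are hypothetical, and `int16_t` stands in for a two-byte word rather than for the C type `int`, whose size depends on the compiler.

```c
#include <assert.h>
#include <stdint.h>

/* ASCII code stored in a char, for example 'A' -> 65 (0x41). */
int asciiCode(char c) {
    assert((unsigned char)c < 128);     /* ASCII defines only 7-bit codes */
    return (unsigned char)c;
}

/* Bit pattern of a 16-bit two's complement value, viewed as an unsigned word. */
uint16_t bitPattern(int16_t value) {
    return (uint16_t)value;
}

/* Negate by the two's complement rule: invert every bit, then add 1. */
uint16_t negatePattern(uint16_t bits) {
    return (uint16_t)(~bits + 1u);
}
```

For instance, `bitPattern(-5)` and `negatePattern(5)` both yield the pattern 0xFFFB, which shows that the signed value at the higher level and the bit-level rule agree.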

Level Asmb5

Level Asmb5 is the assembly level. The text presents the concept of the assembler as a translator between two levels—assembly and machine. It introduces Level Asmb5 symbols and the symbol table.

The unified approach really comes into play here. Chapters 5 and 6 present the compiler as a translator from a high-order language to assembly language. Previously, students learned a specific Level HOL6 language, C, and a specific von Neumann machine, Pep/9. These chapters continue the theme of relationships between the levels by showing the correspondence between (a) assignment statements at Level HOL6 and load/store instructions at Level Asmb5, (b) loops and if statements at Level HOL6 and branching instructions at Level Asmb5, (c) arrays at Level HOL6 and indexed addressing at Level Asmb5, (d) procedure calls at Level HOL6 and the run-time stack at Level Asmb5, (e) function and procedure parameters at Level HOL6 and stack-relative addressing at Level Asmb5, (f) switch statements at Level HOL6 and jump tables at Level Asmb5, and (g) pointers at Level HOL6 and addresses at Level Asmb5.

The beauty of the unified approach is that the text can implement the examples from the C chapter at this lower level. For example, the run-time stack illustrated in the recursive examples of Chapter 2 corresponds directly to the hardware stack in Pep/9 main memory. Students gain an understanding of the compilation process by translating manually between the two levels.

This approach provides a natural setting for the discussion of central issues in computer science. For example, the book presents structured programming at Level HOL6 versus the possibility of unstructured programming at Level Asmb5. It discusses the goto controversy and the structured programming/efficiency tradeoff, giving concrete examples from languages at the two levels.

Chapter 7, “Language Translation Principles,” introduces students to computer science theory. Now that students know intuitively how to translate from a high-level language to assembly language, we pose the fundamental question underlying all of computing: What can be automated? The theory naturally fits in here because students now know what a compiler (an automated translator) must do. They learn about parsing and finite-state machines—deterministic and nondeterministic—in the context of recognizing C and Pep/9 assembly language tokens. This chapter includes an automatic translator between two small languages, which illustrates lexical analysis, parsing, and code generation. The lexical analyzer is an implementation of a finite-state machine. What could be a more natural setting for the theory?
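To make the link between finite-state machines and lexical analysis concrete, here is a minimal sketch of a deterministic machine that recognizes a signed decimal integer token. It is not the translator from Chapter 7; the state names and the functions `step` and `isIntegerToken` are assumptions chosen for illustration.

```c
#include <assert.h>
#include <ctype.h>
#include <stdbool.h>
#include <stddef.h>

typedef enum { S_START, S_SIGN, S_DIGITS, S_REJECT } State;

/* One transition of the machine: current state + input character -> next state. */
static State step(State s, char c) {
    bool digit = isdigit((unsigned char)c) != 0;
    switch (s) {
    case S_START:
        if (c == '+' || c == '-') return S_SIGN;
        return digit ? S_DIGITS : S_REJECT;
    case S_SIGN:
    case S_DIGITS:
        return digit ? S_DIGITS : S_REJECT;
    default:
        return S_REJECT;        /* once rejected, always rejected */
    }
}

/* Accepts an optional sign followed by one or more digits.
   S_DIGITS is the only final (accepting) state. */
bool isIntegerToken(const char *text) {
    assert(text != NULL);
    State s = S_START;
    for (size_t i = 0; text[i] != '\0'; i++) {
        s = step(s, text[i]);
    }
    return s == S_DIGITS;
}
```

The transition function is a direct transcription of a state transition diagram, so strings such as "42" and "-7" are accepted while "+" and "12a" are not.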

Level OS4

Level OS4 consists of two chapters on operating systems. Chapter 8 is a description of process management. Two sections, one on loaders and another on trap handlers, illustrate the concepts with the Pep/9 operating system. Seven instructions have unimplemented opcodes that generate software traps. The operating system stores the process control block of the user’s running process on the system stack, and the interrupt service routine interprets the instruction. The classic state transition diagram for running and waiting processes in an operating system is thus reinforced with a specific implementation of a suspended process. The chapter concludes with a description of concurrent processes and deadlocks. Chapter 9 describes storage management, both main memory and disk memory.

Level LG1

Level LG1 uses two chapters to present combinational and sequential circuits. Chapter 10 emphasizes the importance of the mathematical foundation of computer science by starting with the axioms of Boolean algebra. It shows the relationship between Boolean algebra and logic gates and then describes some common logic devices, including a complete logic design of the Pep/9 arithmetic logic unit (ALU). Chapter 11 illustrates the fundamental concept of a finite-state machine through the state transition diagrams of sequential circuits. It concludes with a description of common computer subsystems such as bidirectional buses, memory chips, and two-port memory banks.

Level Mc2

Chapter 12 describes the microprogrammed control section of the Pep/9 CPU. It gives the control sequences for a few sample instructions and addressing modes and provides a large set of exercises for the others. It also presents concepts of load/store architectures, contrasting the MIPS reduced instruction set computer (RISC) machine with the Pep/9 CISC machine. It concludes with performance issues by describing cache memories, pipelining, dynamic branch prediction, and superscalar machines.

This book offers such broad coverage that instructors may wish to omit some of the material when designing a course. I use Chapters 1–7 in a computer systems course and Chapters 10–12 in a computer organization course.

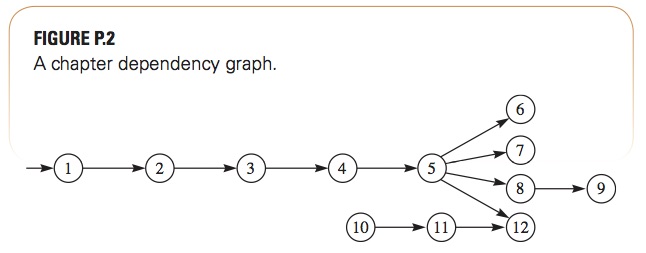

In the book, Chapters 1–5 must be covered sequentially. Chapters 6 (“Compiling to the Assembly Level”) and 7 (“Language Translation Principles”) can be covered in either order. I often skip ahead to Chapter 7 to initiate a large software project, writing an assembler for a subset of Pep/9 assembly language, so students will have sufficient time to complete it during the semester. Chapter 11 (“Sequential Circuits”) is obviously dependent on Chapter 10 (“Combinational Circuits”), but neither depends on Chapter 9 (“Storage Management”), which may be omitted. FIGURE P.2, a chapter dependency graph, summarizes the possible chapter omissions.

Every chapter of this edition has improvements, which fall into two categories: a change in the virtual machine from Pep/8 to Pep/9 and other changes in content. The changes in content are too numerous to list, but major changes include the following:

- HOL6 language—This edition changes the HOL6 language from C++ to C. The C language is more common as a systems programming language and is more appropriate for a computer systems text. The previous edition refers to the memory model of C++ consisting of global variables allocated at a fixed location in memory, local variables and parameters allocated on the run-time stack, and dynamic variables allocated from the heap. But C++ is an object-oriented language with a more complex memory model. The above model more accurately describes C than C++. The switch is visible in three places. Input/output in the example programs uses scanf() and printf() instead of cin and cout. The pass-by-reference mechanism in C has the address operator & explicit in the actual parameter with a corresponding pointer type in the formal parameter. Memory allocation from the heap uses malloc instead of new.

- Sidebars—In this edition, the brief biographies formerly found in each chapter have been replaced with a different set of sidebars. Each sidebar is a real-world example of the concepts described in that chapter. As most of the chapters describe the Pep/9 virtual machine, the sidebars for those chapters show some corresponding implementations for the Intel x86 architecture. The new sidebars give a consistent running example of this architecture so students have a better idea of how the concepts of the virtual machine relate to real-world implementations.

- New and expanded topics—Chapter 1 now emphasizes how binary information is quantified in both space and time by presenting the performance equation and the concept of bandwidth. QR codes and color displays are detailed examples of these concepts. Chapter 3 describes Unicode as well as UTF-32 and UTF-8 encoding. Chapter 4 discusses big-endian and little-endian order. Chapter 7 uses Java instead of C++ as the implementation language for the example translator. The microcode examples in Chapter 12 use the new UnitPre and UnitPost feature of the Pep/9 CPU simulator. The two-byte data bus now has simulator support, and that topic leads to an extended discussion of memory alignment issues and the new .ALIGN assembler directive.
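The explicit address operator described in the HOL6 language item above can be seen in a short sketch. The functions `swap` and `orderPair` are hypothetical. To keep the example independent of the keyboard and screen, it uses `sscanf` and `snprintf`, the string-based relatives of `scanf` and `printf`; the use of `&` in the actual parameters is the same.

```c
#include <assert.h>
#include <stdio.h>

/* Formal parameters are pointers; the caller passes addresses with &. */
void swap(int *first, int *second) {
    assert(first != NULL && second != NULL);
    int temp = *first;
    *first = *second;
    *second = temp;
}

/* Parses two integers from text, puts them in ascending order, and
   formats them as "lo hi" into out. Returns the number of characters
   formatted, or -1 if text does not contain two integers. */
int orderPair(const char *text, char *out, size_t outSize) {
    int a, b;
    if (sscanf(text, "%d %d", &a, &b) != 2) {   /* & makes a and b writable */
        return -1;
    }
    if (a > b) {
        swap(&a, &b);                           /* explicit & at the call site */
    }
    return snprintf(out, outSize, "%d %d", a, b);
}
```

In C++, the same `swap` could declare reference parameters and be called without `&`; in C, the address is visible both at the call and in the pointer types of the formal parameters.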

Pep/8, the virtual machine for the two previous editions, has been superseded by Pep/9. As the instruction sets of the two machines are different, Pep/8 source and object programs are not compatible with those of Pep/9. Only a few instructions are affected, however, so much remains the same, including the set of eight addressing modes. The changes for Pep/9 include the following:

- Replacement of RETn with RET—In Pep/8, there are eight versions of the return statement, RET0, RET1, …, RET7. RETn deallocates n bytes from the run-time stack and then executes a return from a function call. The reasoning is that returns are always preceded by a deallocation of local variables, so programs are shorter if the assembly language programmer is not required to write an ADDSP instruction to explicitly deallocate the locals before the return.

  While this ISA design may be justified on architectural principles, it turned out to be deficient pedagogically. The problem is that students must learn two different concepts: the deallocation mechanism for data and the return mechanism for flow of control. Combining these two different concepts into one statement can be confusing during the learning process. Pep/9 now requires students to explicitly deallocate locals with the ADDSP statement. An added stylistic advantage is that the explicit ADDSP to deallocate locals at the end of a function corresponds directly to the SUBSP at the beginning of the function to allocate locals.

- Memory-mapped I/O—Of all the instructions in the Pep/8 instruction set, the most unrealistic are CHARI and CHARO for character input and output. Most real computer systems map input and output devices to main memory, which is now the design of Pep/9. In the new instruction set, there are no native input and output instructions. Instead, the Pep/8 instruction CHARI alpha,ad is replaced by the Pep/9 instructions

      LDBA charIn,d   ;Load byte to A from charIn
      STBA alpha,ad   ;Store byte from A to alpha

  where charIn is the input device. The Pep/8 instruction CHARO beta,ad is replaced by the Pep/9 instructions

      LDBA beta,ad    ;Load byte to A from beta
      STBA charOut,d  ;Store byte to charOut

  where charOut is the output device.

  In the above code fragments, ad represents any valid addressing mode for the instruction. Symbols charIn and charOut are defined in the Pep/9 operating system and stored as machine vectors at the bottom of memory. Their values are included automatically in the symbol table of the assembler.

  One disadvantage of memory-mapped I/O is that every CHARI and CHARO statement in a Pep/8 program must now be written as two statements, making programs longer. This disadvantage is mitigated by the fact that the trap instructions DECI, DECO, and STRO work as before, as the native I/O statements are hidden inside their trap routines.

  The advantage is that students learn firsthand how memory-mapped I/O works by loading from the input device address and storing to the output device address. This requirement also illustrates the concept and the use of the memory map, a topic that students have a tendency to avoid with Pep/8. There is also a nice connection with the example in Chapter 11 on address decoding that shows how to wire an eight-port I/O chip into the memory map.

- New native instruction CPBr—In Pep/8, byte quantities must be compared with CPr, which compares two-byte quantities. Consequently, the high-order byte of the comparison must be considered, sometimes by clearing the high-order byte of the register before the comparison is made. The resulting assembler code for doing byte comparisons is convoluted. CPBr is a new compare byte instruction that sets the status bits without regard to the high-order byte of the register. The resulting code is simpler to understand and to write.

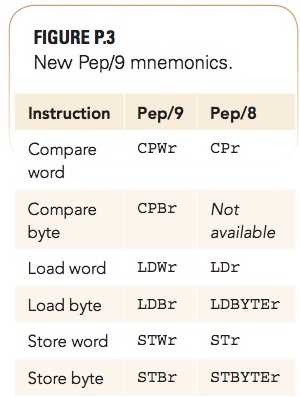

- Improved mnemonics—Pep/9 renames the mnemonics for the compare, load, and store instructions, as FIGURE P.3 shows. The new scheme retains the letters CP for compare, LD for load, and ST for store; however, the scheme is now consistent in using the letters W for word, which is now required, and B for byte with this group of instructions. Not only is this naming convention more consistent, but there is a tendency for students to forget the meaning of a word (two bytes in the Pep computers). Including the letter W in the mnemonics for the two-byte instructions reinforces the meaning of “word.”

- New trap instruction HEXO—Pep/9 eliminates the NOP2 and NOP3 trap instructions from the instruction set, which, together with the elimination of the RETn and character I/O instructions, allows the inclusion of another nonunary trap instruction. HEXO, which stands for hexadecimal output, was available in Pep/7 and is resurrected in Pep/9. It outputs a word as four hexadecimal characters.

- Addressing mode nomenclature—Pep/9 changes the name stack-indexed deferred addressing to stack-deferred indexed addressing and the corresponding assembler notation from sxf to sfx. This change more accurately reflects the semantics of the addressing mode, as the stack-deferred operation happens before the index operation.

- Expanded MIPS coverage—The MIPS architecture continues to be the RISC model contrasted with the Pep/9 CISC model. Its coverage is expanded with a more extensive and systematic description of all the MIPS addressing modes and instruction types and their implementation at the LG1 level. This edition also includes a more extensive exposition of RISC design principles, as illustrated by the MIPS architecture.
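The memory-mapped I/O item above can be illustrated with a small simulation in C. This is only a sketch under stated assumptions: the device addresses `CHAR_IN` and `CHAR_OUT` are placeholders rather than the values defined by the Pep/9 operating system, the `Machine` type and its functions are hypothetical, and direct addressing is assumed for `alpha`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum { CHAR_IN = 0xFFF0, CHAR_OUT = 0xFFF1 };  /* placeholder device addresses */

typedef struct {
    uint8_t mem[0x10000];   /* 64 KiB of simulated main memory */
    const char *input;      /* characters waiting at the input device */
    char output[64];        /* characters written to the output device */
    size_t outLen;
} Machine;

/* Load byte: reading the input device address consumes one input character. */
uint8_t loadByte(Machine *m, uint16_t addr) {
    if (addr == CHAR_IN) {
        return *m->input ? (uint8_t)*m->input++ : 0;
    }
    return m->mem[addr];
}

/* Store byte: writing the output device address emits one character. */
void storeByte(Machine *m, uint16_t addr, uint8_t value) {
    if (addr == CHAR_OUT) {
        assert(m->outLen + 1 < sizeof m->output);
        m->output[m->outLen++] = (char)value;
        m->output[m->outLen] = '\0';
        return;
    }
    m->mem[addr] = value;
}

/* Echo one character using the load/store pairs that replace CHARI and CHARO. */
void echoChar(Machine *m, uint16_t alpha) {
    uint8_t a = loadByte(m, CHAR_IN);   /* LDBA charIn,d  */
    storeByte(m, alpha, a);             /* STBA alpha,d   */
    a = loadByte(m, alpha);             /* LDBA alpha,d   */
    storeByte(m, CHAR_OUT, a);          /* STBA charOut,d */
}
```

No special input or output instruction appears anywhere in the sketch: the same load and store operations reach either ordinary memory or a device, depending only on the address.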

Computer Systems has several unique features that differentiate it from other texts on computer systems, assembly language, and computer organization.

- Conceptual approach—Many textbooks attempt to stay abreast of the field by including the latest technological developments, such as communication protocol specifications for the newest peripheral devices. They typically have how-this-device-works narrative explanations throughout. This text eschews such material in favor of selecting only those computing concepts that are fundamental, the mastery of which provides a basis for understanding both current and future technology. For instance, in mastering the concept of the space/time tradeoff, it is more important for students to experience the ideas with digital circuit design problems than to simply read about them in general. As another example, the concept of hardware parallelism is mastered best by learning how to combine cycles in a microcode implementation of an ISA instruction.

- Problem-solving emphasis—Students retain less when they only hear about or read about a subject. They retain more when they experience the subject. Computer Systems reflects this emphasis through the nearly 400 problem-solving exercises at the end of the chapters, many with multiple parts. Rather than ask the student to repeat verbiage from the text, the exercises require quantitative answers, or the analysis or design of a program or digital circuit at one of the levels of abstraction of the system.

- Consistent machine model—The Pep/9 machine, a small CISC computer, is the vehicle for describing all the levels of the system. Students clearly see the relation between the levels of abstraction because they either program or design digital circuits for that machine at all the levels. For example, when they design an ALU component at the LG1 level, they know where the ALU fits in the implementation of the ISA3 level. They learn the difference between an optimizing and nonoptimizing compiler by translating C programs to assembly language as a compiler would. Using the same machine model for these learning activities at the different levels is a huge productivity advantage because the model is consistent from top to bottom. However, Computer Systems also presents the MIPS machine to contrast RISC design principles with microprogrammed CISC designs.

- Complete program examples—Many computer organization and assembly language texts suffer from the code fragment syndrome. The memory model, addressing modes, and I/O features of Pep/9 enable students to write complete programs that can be easily executed and tested without resorting just to code fragments. Real machines, and especially RISC machines, have complex function-calling protocols involving issues like register allocation, register spillover, and memory alignment constraints. Pep/9 is one of the few pedagogic machines—perhaps the only one—that permits students to write complete programs with input and output using the following: global and local variables, global and local arrays, call by value and by reference, array parameters, switch statements with jump tables, recursion, linked structures with pointers, and the heap. Assignments to write complete programs further the goal of learning by doing, as opposed to learning by reading code fragments.

- Integration of theory and practice—Some readers observe that Chapter 7 on language translation principles is unusual in a computer systems book. This observation is a sad commentary on the gulf between theory and practice in computer science curricula and perhaps in the field of computer science itself. Because the text presents the C language at Level HOL6, assembly language at Level Asmb5, and machine language at Level ISA3, and has as one goal understanding the relationship between the levels, a better question is: How could a chapter on language translation principles not be included? Computer Systems incorporates theory whenever possible to bolster practice. For example, it presents Boolean algebra as an axiomatic system with exercises for proving theorems.

- Breadth and depth—The material in Chapters 1–6 is typical for books on computer systems or assembly language programming, and that in Chapters 8–12 for computer organization. Combining this breadth of material into one volume is unique and permits a consistent machine model to be used throughout the levels of abstraction of the complete system. Also unique is the depth of coverage at the digital circuit LG1 level, which takes the mystery out of the component parts of the CPU. For example, Computer Systems describes the implementations of the multiplexers, adders, ALUs, registers, memory subsystems, and bidirectional buses for the Pep/9 CPU. Students learn the implementation down to the logic gate level, with no conceptual holes in the grand narrative that would otherwise have to be taken on faith without complete understanding.

Computer Systems answers the question: What is the place of assembly language programming and computer organization in the computer science curriculum? It is to provide a depth of understanding about the architecture of the ubiquitous von Neumann machine. This text retains its unique goal to provide a balanced overview of all the main areas of the field, including the integration of software and hardware and the integration of theory and practice.

The first edition of Computer Systems was published in 1999. From the beginning, its goal was to unify the presentation of computer systems using a single virtual machine, Pep/6 in the first edition, to illustrate the relationships between all seven levels of abstraction in a typical computer system. The text cut across many of the standard courses at the time (and even now), taking a breadth approach and, in so doing, sacrificing the depth of traditional details taught in standard assembly language, operating systems, computer architecture, computer organization, and digital circuit design courses.

The latest joint ACM/IEEE curriculum guidelines, Computer Science Curricula 2013 (CS2013), note the curriculum challenges arising from the rapid expansion of the field:

The growing diversity of topics potentially relevant to an education in Computer Science and the increasing integration of computing with other disciplines create particular challenges for this effort. Balancing topical growth with the need to keep recommendations realistic and implementable in the context of undergraduate education is particularly difficult.

One response to the challenge is the evolution of the guidelines in precisely the direction that Computer Systems has occupied since its first edition. CS2013 restructures previous “bodies of knowledge,” deleting some old areas and creating some new ones. One of the new knowledge areas (KAs) of the guidelines is Systems Fundamentals:

In previous curricular volumes, the interacting layers of a typical computing system, from hardware building blocks, to architectural organization, to operating system services, to application execution environments … were presented in independent knowledge areas. The new Systems Fundamentals KA presents a unified systems perspective and common conceptual foundation for other KAs …

The goal of the new Systems Fundamentals KA in CS2013 is identical to the goal of Computer Systems, namely to present a “unified systems perspective” and a common conceptual foundation for other topics of computer science. Computer Systems is a mature text that uniquely satisfies this important new goal of the latest computer science curriculum guidelines.

The support material listed below is available from the publisher’s website (go.jblearning.com/warford5e).

Pep/9 Assembler and Simulator

The Pep/9 machine is available for MS Windows, Mac OS X, and Unix/Linux systems. The assembler features the following:

- An integrated text editor

- Error messages that are inserted within the source code at the place where the error is detected

- Student-friendly machine language object code in hexadecimal format

- The ability to code directly in machine language, bypassing the assembler

- The ability to redefine the mnemonics for the unimplemented opcodes that trigger synchronous traps

The simulator features the following:

- Simulated ROM that is not altered by store instructions

- A small operating system burned into simulated ROM that includes a loader and a trap handler system

- An integrated debugger that allows for breakpoints, single-step execution, CPU tracing, and memory tracing

- The option to trace an application, the loader, or the operating system in any combination

- The ability to recover from endless loops

- The ability to modify the operating system by designing new trap handlers for the unimplemented opcodes

- Every example from the text built into the application, making it a useful tool for class demonstrations

Pep/9 CPU Simulator

A CPU simulator, also available for MS Windows, Mac OS X, and Unix/Linux systems, is provided for use in the computer organization course. The CPU simulator features the following:

- Color-coded display paths that trace the data flow, depending on control signals to the multiplexers

- A single-cycle mode of operation with graphical user interface inputs for each control signal and instant visual display of the effects of the signal

- A multi-cycle mode of operation with an integrated text editor for the student to write Mc2 microcode sequences and execute them to implement ISA3 instructions

- New to this edition, a set of unit tests for every example and every microcode problem built into the application

Lecture Slides

A complete set of about 50 to 125 lecture slides per chapter is available in PDF format. The slides include every figure from the text as well as summary information, often in bullet-point format. They do not, however, include many examples, so they leave room for instructor presentation of examples and instructor-led discussions.

Exam Handouts

A set of exam handouts, including reference information such as the ASCII table and instruction set tables, is provided for reference during exams and study sessions.

Digital Circuit Labs

A set of six digital circuit labs provides hands-on experience with physical breadboards. The labs illustrate the combinational and sequential devices from Chapters 10 and 11 with many circuits that are not in the book. Students learn practical digital design and implementation concepts that are beyond the scope of the text itself. They follow the sequence of topics from the text, beginning with combinational circuits and progressing through sequential circuits and ALUs.

Solutions Manual

Solutions to selected exercises are provided in an appendix. Solutions to all the exercises and problems are available to instructors who adopt the book.